The Evolution of Pony.ai’s World Model

From virtual training grounds for AI to a self-improving physical AI engine

In March 2016, AlphaGo beat Lee Sedol and showed the world what artificial intelligence could do. It was a defining moment for AI — and part of what kicked off the modern wave of investment and innovation. Pony.ai was founded that same year.

Back then, a lot of people thought autonomous driving would be pretty straightforward: give AI enough labeled data, teach it to see the world like a human, and human-level driving would follow.

That turned out to be wrong.

Driving is much harder than image recognition. A 99% success rate is fine for classifying photos. In L4 autonomous driving, it is not even close. That remaining 1% is where red lights get run, collisions happen, and bad calls get made. The bar is also simply higher for AI than it is for humans. When a person makes a driving mistake, it is usually not news. When AI does, it always is.

Driving is also deeply interactive. It is not just about seeing the world correctly. It is about making safe, smooth, and efficient decisions while other drivers, cyclists, and pedestrians are all reacting to you in real time.

That is partly why true driverless autonomy took longer than most people expected. It is also why scale matters. A small number of vehicles can get by on luck. A large fleet running on public roads without frequent incidents — that is what actually starts to prove statistical safety.
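One way to make "statistical safety" concrete is the standard zero-failure bound, often called the rule of three: after n incident-free kilometers, the 95% upper confidence bound on the incident rate is roughly 3/n. The sketch below assumes incidents arrive as a Poisson process. The fleet sizes and mileages are illustrative only, not Pony.ai figures.

```python
import math

def incident_rate_upper_bound(km_without_incident: float, confidence: float = 0.95) -> float:
    """Upper bound on incidents per km after driving that distance with zero incidents.

    Assumes a Poisson process, so P(no incident) = exp(-rate * km).
    Setting that probability to (1 - confidence) and solving for rate gives the bound.
    """
    if km_without_incident <= 0:
        raise ValueError("distance must be positive")
    return -math.log(1.0 - confidence) / km_without_incident

# Illustrative numbers only (not taken from the article).
small_fleet_km = 10 * 20_000       # 10 vehicles, 20,000 km each
large_fleet_km = 1_000 * 20_000    # 1,000 vehicles, 20,000 km each

for label, km in [("small", small_fleet_km), ("large", large_fleet_km)]:
    bound = incident_rate_upper_bound(km)
    print(f"{label} fleet: at most {bound * 1e6:.2f} incidents per million km at 95% confidence")
```

The bound shrinks in proportion to distance driven, which is why a clean record from a handful of vehicles says little while the same record from a large fleet says a lot.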

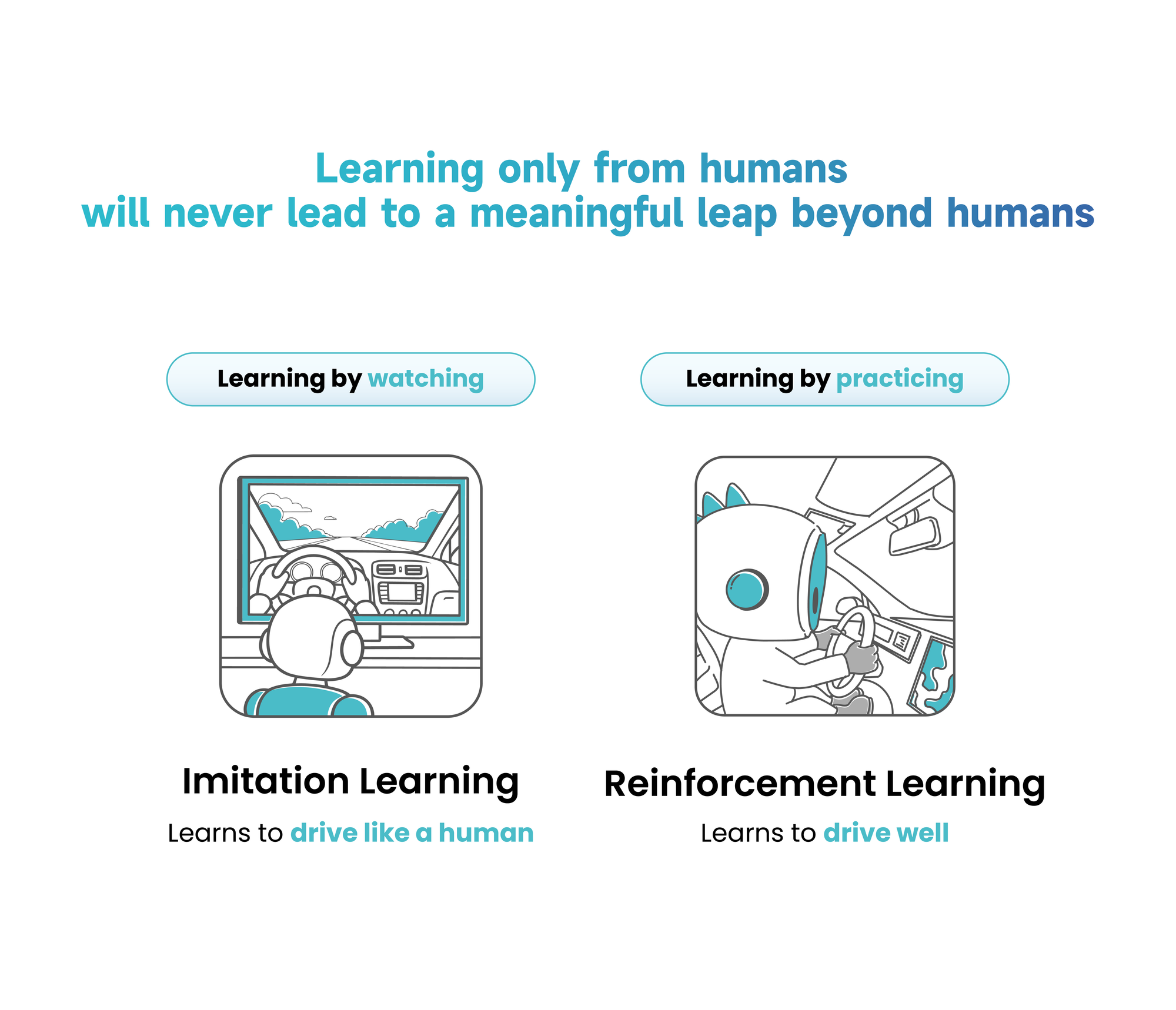

Driving well, not driving like a human

As the industry looked for a path to full driverless capability, two camps formed. One kept betting on human driving data: more examples, more edge cases, better model.

Pony.ai did not see it that way.

Driving differently from a human does not mean driving wrong. And driving like a human can still be dangerously off in subtle ways.

For L4, the goal has never been to mimic humans. It is to drive well: safely, comfortably, and efficiently.

That distinction changes everything.

L4 has no human fallback. Unlike L2, it cannot depend on a driver stepping in. A system that beats humans in nearly every case but goes wrong in the few that remain still is not good enough.

Once the target shifts from “drive like a human” to “drive well,” imitation learning stops being enough.

It becomes a reinforcement learning problem.

That is where PonyWorld began.

What a world model actually is

By 2024 and 2025, the evidence was clear. Large commercial robotaxi fleets were running in multiple cities. The argument that more human driving data would eventually carry L2 systems to true L4 was getting harder to make. More companies started moving toward reinforcement learning and world models.

By 2026, that was the consensus in both the US and China. Pony.ai had been there for years.

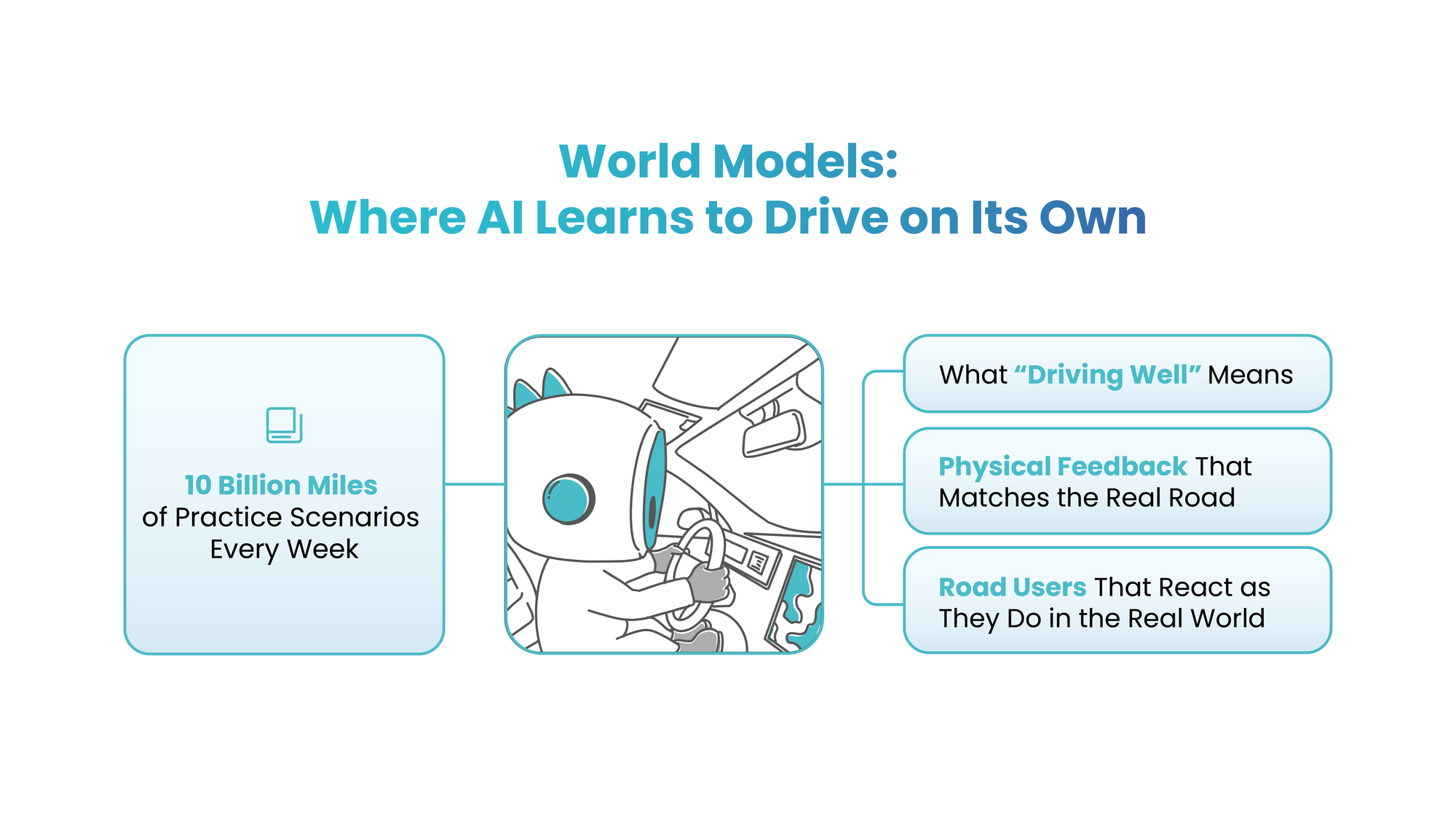

Even now, the phrase “world model” gets misunderstood a lot. Many people picture a simulator — like a realistic game engine generating synthetic data to train an AI driver.

That is not what Pony.ai built.

PonyWorld is not a single module. It is a full system — from cloud-side training to onboard model deployment. Pony.ai has been building it since 2020, and it is already running in production today.

For a world model to actually work, it needs to do three things:

- Define what good driving means.

That is the reward function in reinforcement learning. It has to be learning-based, not rule-based.

- Build a high-fidelity model of the physical world.

That model has to accurately capture the kinematics of the ego vehicle and all surrounding traffic participants.

- Model interaction.

This is the critical one. Driving is not just about generating edge cases. A useful world model has to capture how the world reacts to the AI driver — in both long-tail scenarios and everyday traffic.

If the AI starts to merge and there is a car in the next lane, that driver might yield, speed up, hesitate, or hold their ground. Those behavioral patterns have to exist in the training environment.

All three together are what Pony.ai calls world model accuracy.
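To make the three requirements concrete, here is a deliberately tiny sketch of a merge scenario with a reward, a kinematic step, and a model of how a neighboring driver reacts. It is not PonyWorld. Every name, weight, and reaction value is a hypothetical placeholder, and the hand-written reward stands in for what the article says should be learned.

```python
import random
from dataclasses import dataclass

@dataclass
class VehicleState:
    position_m: float
    speed_mps: float

def step_kinematics(state: VehicleState, accel_mps2: float, dt: float = 0.1) -> VehicleState:
    """Constant-acceleration update; a toy stand-in for a high-fidelity vehicle model."""
    new_speed = max(0.0, state.speed_mps + accel_mps2 * dt)
    new_position = state.position_m + 0.5 * (state.speed_mps + new_speed) * dt
    return VehicleState(new_position, new_speed)

# Hypothetical reactions of the driver in the target lane, as accelerations in m/s^2.
NEIGHBOR_REACTIONS = {"yield": -1.5, "speed_up": 1.0, "hesitate": -0.3, "hold": 0.0}

def sample_neighbor_accel(rng: random.Random) -> float:
    """Interaction model: pick how the neighbor responds to the ego merge.

    Uniform sampling is a placeholder; a real model would condition on the
    ego vehicle's behavior and be fitted to observed reactions.
    """
    reaction = rng.choice(sorted(NEIGHBOR_REACTIONS))
    return NEIGHBOR_REACTIONS[reaction]

def reward(gap_m: float, ego_accel: float, ego_speed: float) -> float:
    """Score safety, comfort, and efficiency with fixed placeholder weights."""
    safety_penalty = max(0.0, 10.0 - abs(gap_m))   # penalize gaps under 10 m
    comfort_penalty = abs(ego_accel)               # penalize harsh acceleration
    return -1.0 * safety_penalty - 0.1 * comfort_penalty + 0.05 * ego_speed

def rollout(ego_accel: float, steps: int = 50, seed: int = 0) -> float:
    """Return the total reward of one simulated merge attempt."""
    rng = random.Random(seed)
    ego = VehicleState(position_m=0.0, speed_mps=15.0)
    neighbor = VehicleState(position_m=-8.0, speed_mps=15.0)
    neighbor_accel = sample_neighbor_accel(rng)
    total = 0.0
    for _ in range(steps):
        ego = step_kinematics(ego, ego_accel)
        neighbor = step_kinematics(neighbor, neighbor_accel)
        total += reward(ego.position_m - neighbor.position_m, ego_accel, ego.speed_mps)
    return total

# Compare two candidate ego accelerations across several sampled neighbor reactions.
for accel in (0.0, 1.0):
    mean_return = sum(rollout(accel, seed=s) for s in range(20)) / 20
    print(f"ego accel {accel:+.1f} m/s^2 -> mean return {mean_return:.2f}")
```

If any of the three pieces is wrong (the reward, the kinematics, or the reaction model), the returns rank candidate behaviors incorrectly, and a policy trained on them learns the wrong thing.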

Once the first generation of PonyWorld was deployed, improving autonomous driving increasingly became about improving world model accuracy.

But a world model produces positive training outcomes for the AI driver only when it is accurate enough in all three of these aspects. Otherwise, the driving policy may learn from unrealistic scenarios, drift toward the wrong behaviors, and even underperform imitation learning trained on massive amounts of human driving data.

Why AI driving data beats human driving data

One of the hardest parts of building a world model is not simulating the road. It is simulating how the road responds to an AI.

That is where human driving data starts to lose value.

A world model built only on human data can simulate how people respond to people. But once an AI driver begins to exhibit driving behaviors that differ from those of human drivers, surrounding traffic reacts differently. You cannot estimate that. You have to observe it.

The only way to learn it is to put AI on the road.

That is why, past a certain point, the most valuable data is no longer human driving data — it is AI driving data.

Once the AI moves beyond human performance on safety, only AI-generated real-world data can keep improving the world model in the ways that matter. Other road users do not respond to AI the way they respond to human drivers. A world model trained only on human data will always miss that.

At Pony.ai, some of the biggest safety gains did not come before fully driverless operations. They came after driverless vehicles were already on the road at real scale.

By then, the AI was strong enough that the data coming in was far more useful — for world model accuracy, and for the onboard model itself.

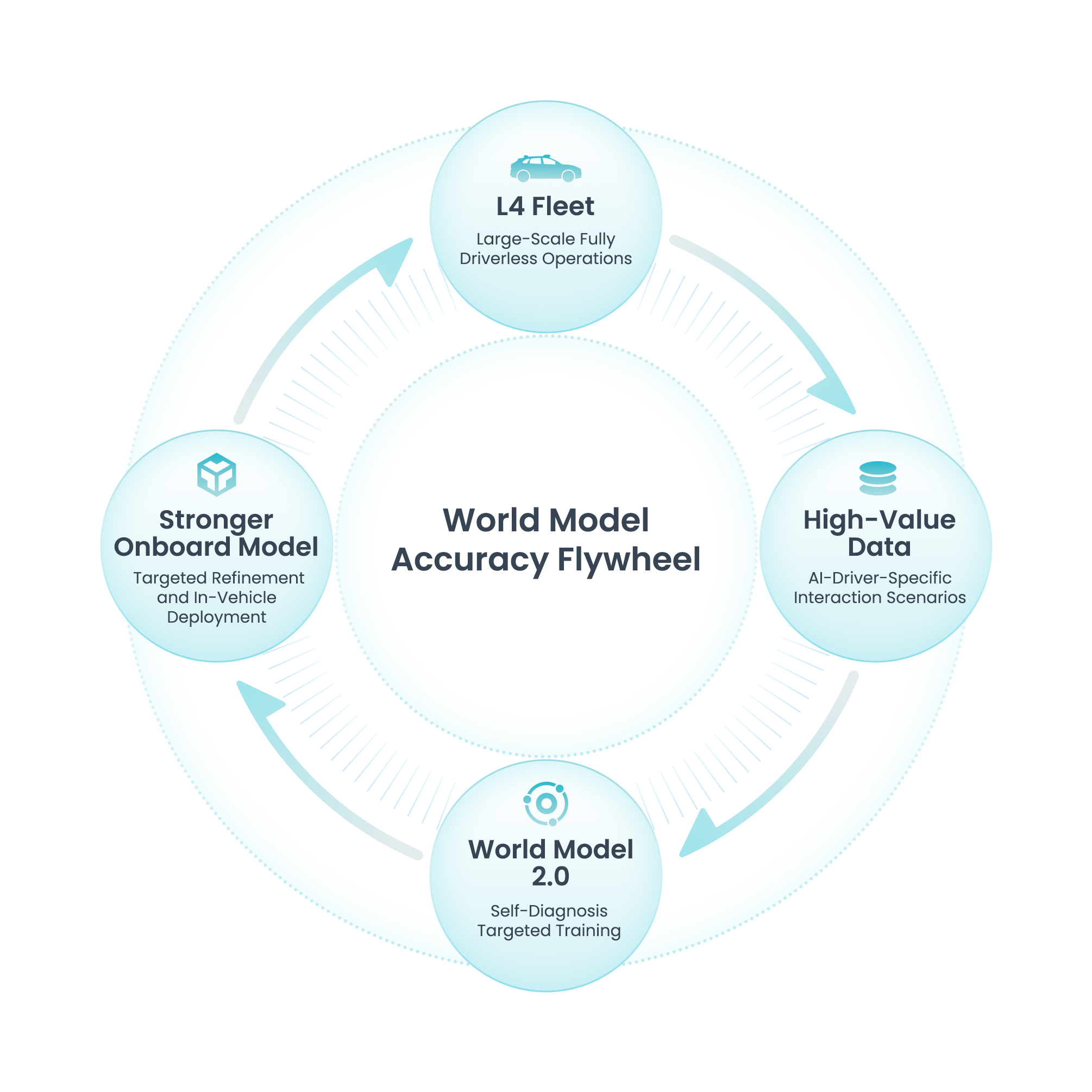

The flywheel — and the moat behind it

This is where the structural moat starts to appear.

Once the AI driver moves beyond the level of an ordinary human driver, human driving data stops being useful for improvement. It is like asking a top Go player to study amateur games. It will not help. They need harder opponents and positions they have never seen.

In autonomous driving, those situations come from one place: large-scale L4 driverless fleets in real traffic.

This data is uniquely valuable. The AI drives on its own in live conditions. It encounters situations human drivers do not, because traffic behaves differently around it. Those patterns cannot be replicated any other way.

That creates a flywheel:

More driverless operations → better data → better world model → stronger onboard model → broader L4 deployment → even better data

Once that loop starts, it compounds.

- The data becomes proprietary.

- Improvement becomes self-guided.

- The loop gets more efficient as it scales.

Companies without large-scale L4 driverless operations cannot start this loop — and they cannot catch up just by buying more GPUs, hiring more labelers, or training longer on L2 data.

This is not just a technical edge. It is a structural moat.

Why Pony.ai did not put language in the middle

At one point, a popular idea was to put a vision-language-action (VLA) model between perception and action: describe the scene in words first, then decide how to drive.

Pony.ai did not think that was right.

A good driver does not narrate a sentence before making an emergency maneuver. Driving runs on real-time spatial understanding and near-instinctive responses. Language is too slow and too lossy for that job. It compresses a rich physical environment into symbols and throws away too much.

So Pony.ai chose a more direct path:

sensor data maps straight to driving action

That saves compute. But the bigger advantage is focus — the system can spend its budget on what actually matters:

- understanding the world

- anticipating what is next

- making better decisions

That efficiency matters in production. On Pony.ai’s seventh-generation Robotaxi, the entire onboard compute platform delivers just 1016 TOPS.

The primary system is built on three NVIDIA DRIVE Orin-X SoCs, while a separate redundant system runs on a fourth Orin-X.

That redundant system is capable of completing the driving task independently, so even if the primary system fails, the vehicle can continue operating safely and pull over at an appropriate location.
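The 1016 TOPS total is consistent with four Orin-X SoCs at NVIDIA's published peak of 254 INT8 TOPS each. The article does not give per-SoC numbers, so that figure is an assumption here. Under it, the split between the primary and redundant systems is simple arithmetic:

```python
ORIN_X_PEAK_TOPS = 254   # assumed: NVIDIA's published peak INT8 figure per DRIVE Orin SoC
PRIMARY_SOCS = 3         # from the article: primary system uses three Orin-X SoCs
REDUNDANT_SOCS = 1       # from the article: redundant system runs on a fourth

primary_tops = PRIMARY_SOCS * ORIN_X_PEAK_TOPS
redundant_tops = REDUNDANT_SOCS * ORIN_X_PEAK_TOPS
total_tops = primary_tops + redundant_tops

print(primary_tops, redundant_tops, total_tops)  # 762 254 1016
```

On these numbers, the redundant system has to complete the driving task on a quarter of the total budget, which shows how much the direct sensor-to-action design depends on an efficient onboard model.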

It also keeps the connection between physical-world data and physical-world modeling clean. When the onboard model is more efficient, the training loop gets more efficient too.

Intention: making the model readable and useful to itself

To improve the iteration loop, Pony.ai introduced an Intention layer during training.

Earlier versions of the onboard model took in sensor data and output steering, braking, and throttle. They drove well, but what was happening inside was hard to read.

Later versions started generating a structured representation of intent alongside each driving action. In plain terms, something like:

“I’m slowing down before the intersection because the pedestrian to the right looks like they’re about to cross.”

This is not a separate model explaining things after the fact. It is not a language model in the inference pipeline either — that would simply bring the same problem back.

Intention is learned jointly with the driving action during training. It keeps what the model thinks aligned with what it does.
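A common way to learn an auxiliary output jointly with the main task is a weighted multi-task loss. The sketch below assumes that setup. The article does not describe Pony.ai's actual loss, and the intent labels, the weight, and the function names are hypothetical.

```python
import math

# Hypothetical intent categories; the article does not list the real ones.
INTENT_LABELS = ["keep_lane", "slow_for_pedestrian", "yield_to_vehicle"]

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def action_loss(predicted, target):
    """Mean squared error over (steering, brake, throttle)."""
    return sum((p - t) ** 2 for p, t in zip(predicted, target)) / len(predicted)

def intent_loss(intent_logits, target_index):
    """Cross-entropy between predicted intent distribution and the labeled intent."""
    return -math.log(softmax(intent_logits)[target_index])

def joint_loss(predicted_action, target_action, intent_logits, target_intent, intent_weight=0.5):
    """One scalar objective, so both outputs are trained together."""
    return (action_loss(predicted_action, target_action)
            + intent_weight * intent_loss(intent_logits, target_intent))

loss = joint_loss(
    predicted_action=[0.0, 0.4, 0.0],      # steering, brake, throttle
    target_action=[0.0, 0.5, 0.0],
    intent_logits=[0.1, 2.0, -1.0],
    target_intent=INTENT_LABELS.index("slow_for_pedestrian"),
)
print(f"joint loss: {loss:.4f}")
```

Because both terms feed a single objective, a training step that improves the action also has to keep the stated intent consistent with it. That is the alignment property the section describes.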

That brings three benefits:

1. Auditability

Engineers do not have to guess what the network was doing. The Intention layer gives them a readable summary of each decision.

2. Debuggability

When something goes wrong, engineers can ask a sharper question:

- Did perception miss it?

- Did the model see it but get the risk wrong?

- Or was the intent right but the execution off?

3. Iterability

Most important of all, once the system can express its own intent, it has the foundation for self-diagnosis.

When it spots that its intention is consistently weak in certain scenarios, it has already started working on the problem.

PonyWorld 2.0: from random evolution to targeted evolution

This is where PonyWorld 2.0 begins.

Once improving autonomous driving becomes about improving world model accuracy, the obvious move is to keep collecting L4 data and refining the system. But as the fleet grows and the world model matures, new data is no longer equally useful. Much of it adds storage and filtering costs without much learning value.

There is a second problem too. Once AI driving moves well beyond human level, human guidance can become unreliable. Sometimes it is simply wrong.

PonyWorld 2.0 changes that logic.

With the Intention layer in place, the onboard model can now say, in structured form, why it made a decision. That unlocks a much more important capability: self-diagnosis.

The system can review its own decisions at scale and compare intent with outcome. It can ask three questions:

- Was the intention right, but the action execution off?

- Was the intention itself wrong?

- Or was the intention wrong because the simulated interaction failed to match the real one — meaning the world model itself was inaccurate?

The first two cases help the world model train the onboard model more efficiently. In practical terms, the system can spend more time on the problems it has not mastered and stop wasting time on the easy ones.
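Spending more time on unmastered problems can be sketched as failure-weighted scenario sampling. This is an illustration, not the article's method. The scenario names, failure rates, and `floor` parameter are all made up.

```python
import random

def sampling_weights(failure_rates: dict[str, float], floor: float = 0.01) -> dict[str, float]:
    """Turn per-scenario failure rates into sampling probabilities.

    The floor keeps mastered scenarios in the mix at a low rate so that
    regressions on them can still be noticed.
    """
    raw = {name: max(rate, floor) for name, rate in failure_rates.items()}
    total = sum(raw.values())
    return {name: value / total for name, value in raw.items()}

# Illustrative failure rates from a hypothetical self-diagnosis pass.
failure_rates = {"unprotected_left": 0.20, "highway_cruise": 0.00, "dense_merge": 0.09}
weights = sampling_weights(failure_rates)

rng = random.Random(0)
batch = rng.choices(list(weights), weights=list(weights.values()), k=8)
print(weights)
print(batch)
```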

The third case is the real breakthrough.

It tells the system where the world model itself needs to improve.

That is the core leap in PonyWorld 2.0: improving world model accuracy is no longer broad and undirected. It becomes targeted.
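The three questions amount to a triage rule over logged decisions. The sketch below is one way to write that rule down. The record fields and the comparison of simulated and observed reactions are assumptions, since the article does not specify how the comparison is made.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum

class Diagnosis(Enum):
    OK = "no issue"
    EXECUTION_OFF = "intention right, execution off"
    INTENTION_WRONG = "intention wrong"
    WORLD_MODEL_MISMATCH = "intention wrong because simulation mismatched reality"

@dataclass
class DecisionRecord:
    scenario: str
    intent_was_appropriate: bool   # judged against the real outcome
    action_matched_intent: bool    # did the executed action carry out the intent?
    simulated_reaction: str        # how the world model expected others to react
    observed_reaction: str         # how they actually reacted on the road

def diagnose(record: DecisionRecord) -> Diagnosis:
    """Map one logged decision onto the three questions from the text."""
    if not record.intent_was_appropriate:
        if record.simulated_reaction != record.observed_reaction:
            return Diagnosis.WORLD_MODEL_MISMATCH
        return Diagnosis.INTENTION_WRONG
    if not record.action_matched_intent:
        return Diagnosis.EXECUTION_OFF
    return Diagnosis.OK

# Hypothetical log entries.
records = [
    DecisionRecord("dense_merge", False, True, "yield", "speed_up"),
    DecisionRecord("dense_merge", False, True, "yield", "hold"),
    DecisionRecord("crosswalk", False, True, "wait", "wait"),
    DecisionRecord("roundabout", True, False, "yield", "yield"),
]

# Scenarios where the world model itself needs work.
world_model_gaps = Counter(
    r.scenario for r in records if diagnose(r) is Diagnosis.WORLD_MODEL_MISMATCH
)
print(world_model_gaps.most_common())
```

Counting the third category by scenario is what turns undirected refinement into a ranked list of where the world model is least accurate.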

When AI starts telling humans what data to collect

PonyWorld 2.0 does more than improve the onboard model more efficiently. It also makes world model improvement itself more efficient by telling humans what data to collect next.

Suppose the system discovers that performance becomes unstable at several intersections in a particular city during late-afternoon backlighting, especially in mixed traffic involving pedestrians and two-wheeled vehicles.

It can generate a targeted data collection task for the testing and operations team.

The team then collects those real-world samples, uploads them to the cloud, and uses them to calibrate the scenario generation model. PonyWorld can then create more realistic training data for targeted fine-tuning.
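A targeted collection task can be thought of as a record produced by grouping unstable events by scenario. The sketch below assumes such a grouping. The field names, thresholds, and sample counts are hypothetical and only illustrate the shape of the workflow.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class ScenarioKey:
    city: str
    lighting: str
    traffic_mix: str

@dataclass
class CollectionTask:
    scenario: ScenarioKey
    unstable_rate: float
    samples_requested: int

def build_tasks(events, min_events=20, max_unstable_rate=0.05, samples_per_task=200):
    """Group (scenario, was_unstable) events and request data where instability is high.

    min_events guards against opening tasks on too little evidence.
    """
    counts = defaultdict(lambda: [0, 0])   # scenario -> [unstable count, total count]
    for scenario, was_unstable in events:
        counts[scenario][0] += int(was_unstable)
        counts[scenario][1] += 1

    tasks = []
    for scenario, (unstable, total) in counts.items():
        rate = unstable / total
        if total >= min_events and rate > max_unstable_rate:
            tasks.append(CollectionTask(scenario, rate, samples_per_task))
    # Worst scenarios first, so the operations team sees priorities at a glance.
    return sorted(tasks, key=lambda task: task.unstable_rate, reverse=True)

# Synthetic events mirroring the example in the text.
backlit = ScenarioKey("city_a", "late_afternoon_backlight", "pedestrians+two_wheelers")
noon = ScenarioKey("city_a", "noon", "cars_only")
events = [(backlit, i % 5 == 0) for i in range(30)] + [(noon, False) for _ in range(30)]

for task in build_tasks(events):
    print(task)
```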

At that point, humans are no longer acting mainly as AI’s teachers. They are becoming operators in an AI-driven improvement loop.

R&D, testing, and operations all begin to organize around the world model’s own accuracy needs.

- Where the model is weak, humans go gather data.

- When it asks for more examples of a scenario, humans go find that scenario.

“Engineers are working for World Model 2.0” sounds like a joke. It is not. It is a concise description of a new R&D paradigm.

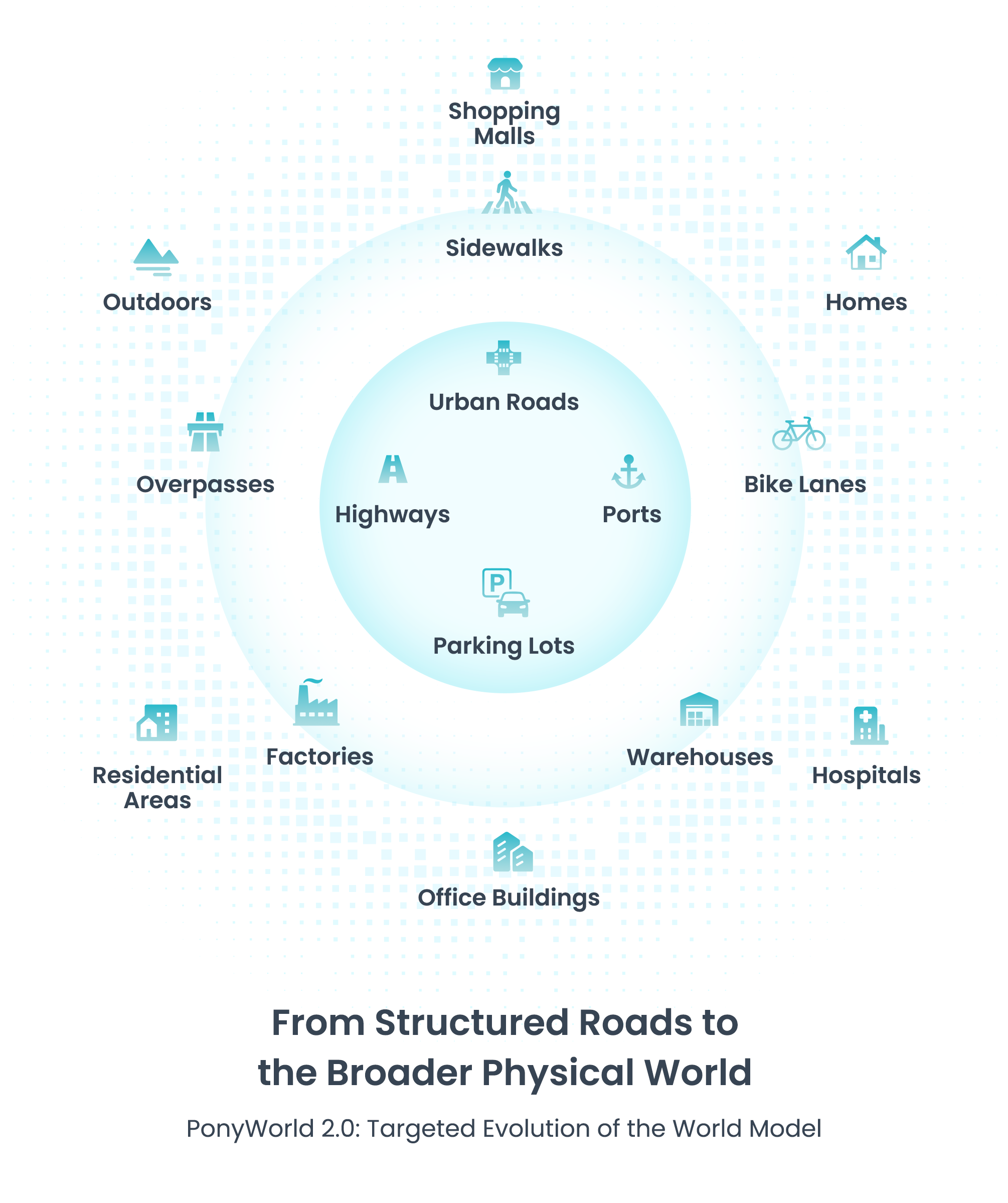

Beyond autonomous driving

As tens of millions of kilometers of autonomous driving data continue to refine PonyWorld — including large-scale fully driverless robotaxi data and robotruck data from highways and ports — the model becomes more aware of the limits of its own coverage.

Today, that coverage is still centered on structured road driving.

Ask the model what it still lacks, and it may tell you it needs more data from a specific city or a newly launched geography. But it may also point to something larger:

- sidewalks

- bike lanes

- pedestrian overpasses

- indoor environments

That is a revealing moment.

PonyWorld today is an autonomous driving world model. It does not have indoor data. But the underlying capability is not inherently limited to autonomous driving.

A world model that can evolve on its own and improve its accuracy efficiently is not only useful for driving. Its ability to expand scenario coverage and raise simulation quality may also matter for physical AI problems that are far more complex than structured road traffic.

Data will never be enough. Compute will never be enough either. In the long run, efficiency becomes one of the most important variables in AI progress.

That is true in autonomous driving, where safety has to exceed human drivers by a wide margin. It is even more true in broader physical AI, where the environments are more complex still.

In both cases, targeted evolution is not optional. It is essential.

Only a world model that can evolve in a directed and self-driven way can support higher-dimensional, higher-complexity physical AI training. Only that kind of system can eventually help AI achieve superhuman capability in tasks far beyond driving.